A New Age for Gaming: Indonesia Joins the Global Push on Child Safety

Words by Safira Pusparani, Government Relations Manager, APAC

Mar 26 2026

7 mins

Indonesia just banned accounts for users under 16 across eight major platforms, including social media and online gaming platforms. For game publishers, this is not just a local compliance issue. It is another sign that child safety is becoming part of the minimum requirement for technology infrastructure and operations.

Starting 28 March 2026, Indonesia’s new child safety regulation, Ministerial Regulation No. 9/2026 (“MR TUNAS”), requires platforms classified as high-risk to disable the accounts of users under 16 years old. The first platforms named for early compliance are YouTube, TikTok, Facebook, Instagram, Threads, X, Bigo Live, and Roblox. The designation of these eight platforms as “high-risk” has been formalized via Ministerial Decree No. 140/2026. Indonesia now has a technical regulatory framework that allows the government to classify additional services, require age verification, and impose sanctions when protections are deemed inadequate.

Child Safety Is Forging the Global Rulebook

Indonesia is not acting in isolation. In shaping MR TUNAS, the Ministry of Communication and Digital Affairs drew from child safety frameworks already in place in other markets, including Australia’s Online Safety Act and the United Kingdom’s Age Appropriate Design Code.

Like its counterparts abroad, Indonesia’s framework places responsibility on platforms to anticipate risk, strengthen protections for younger users, and demonstrate that safeguards are working in practice, not just in policy.

Several major markets have already moved beyond policy discussion into active regulation.

In the United Kingdom, the Age Appropriate Design Code has been shaping how digital services handle children’s data, privacy settings, and product design since 2021, while more recently, the Online Safety Act has expanded obligations around harmful content and child protection. More broadly, across the European Union, the guidelines on the protection of minors under the Digital Services Act are now reinforcing similar expectations, particularly for large platforms with younger users.

Australia has gone further. In December 2025, it became the first country to prohibit under-16s from accessing social media, placing legal responsibility on platforms to prevent access and backing that requirement with penalties of up to AUD 49.5 million.

Brazil is now setting a similarly high bar. Its Digital Child and Adolescent Statute (“ECA Digital”) entered into force on 17 March 2026. Self-declared age checks are no longer sufficient. Guardian-linked accounts are mandatory for under-16s. Paid loot boxes are prohibited in services accessible to minors, bringing monetization design directly into scope.

And the pipeline continues to build. Malaysia, New Zealand, Spain, and France are all considering or advancing tighter age assurance and minimum-age rules for online platforms, while several U.S. states are pursuing similar legislation.

Taken together, these developments suggest that publishers will increasingly face comparable child-safety expectations across markets.

But what does MR TUNAS regulate?

Indonesia’s new child safety framework regulates how digital platforms operating in Indonesia protect child users. Under MR TUNAS, digital platforms (defined in the regulation as “Electronic System Providers”) are assessed based on the level of exposure they create for child users.

The scope is not limited to social media platforms. Services that enable user interaction, content sharing, live communication, or other features commonly found in modern games may fall within the same risk framework where child safety exposure is considered significant.

Age Thresholds

At the centre of the framework are age thresholds that prohibit under-16s from accessing high-risk platforms altogether.

- Under 13: Access allowed for platforms/services specifically designed to be used or accessed by children, with a low-risk profile and parental consent

- Ages 13-15: Access allowed for low-risk platforms/services with parental consent

- Ages 16-18: Access allowed for platforms/services with parental consent

These thresholds set the baseline for how access must be controlled across different types of services.
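For product teams, the tiers above can be sketched as a simple access check. This is an illustration only, not the regulation's own logic: the function name `access_allowed`, the two-level `Risk` enum, the inclusive treatment of age 18, and the assumption that adults are unrestricted are all hypothetical simplifications of the summary in this article.

```python
from enum import Enum


class Risk(Enum):
    # Assumption: only the "low" and "high" labels used in this article are
    # modelled; any intermediate tier in the regulation is not represented.
    LOW = "low"
    HIGH = "high"


def access_allowed(age: int, risk: Risk, designed_for_children: bool,
                   parental_consent: bool) -> bool:
    """Return True if the age tiers summarised above would permit access."""
    if age > 18:
        # Assumption: users above the listed tiers are not restricted.
        return True
    if age >= 16:
        # Ages 16-18: access with parental consent.
        return parental_consent
    if risk is Risk.HIGH:
        # Under-16s are barred from high-risk platforms altogether.
        return False
    if age >= 13:
        # Ages 13-15: low-risk services with parental consent.
        return parental_consent
    # Under 13: low-risk services designed for children, with consent.
    return designed_for_children and parental_consent
```

For example, `access_allowed(15, Risk.HIGH, False, True)` returns `False`, because parental consent does not unlock a high-risk platform for a 15-year-old under this reading.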

High-Risk Platforms

Platform classification is based on seven risk indicators, including:

- Interaction with strangers

- Exposure to harmful or inappropriate content

- Exploitation of children as consumers

- Personal data protection risks

- Addiction and overuse

- Potential psychological harm

- Potential physiological harm

A high-risk rating in any one category may result in an overall high-risk classification, triggering stricter obligations and closer regulatory scrutiny.
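The "any one category" rule can be expressed as a short roll-up. The sketch below is a simplification under stated assumptions: the indicator keys, the `"high"` rating label, and the function `overall_classification` are hypothetical names, and the code treats the escalation as automatic even though the regulation says it "may" result in a high-risk classification.

```python
# Hypothetical keys for the seven risk indicators listed above.
RISK_INDICATORS = (
    "interaction_with_strangers",
    "harmful_content_exposure",
    "consumer_exploitation",
    "personal_data_protection",
    "addiction_and_overuse",
    "psychological_harm",
    "physiological_harm",
)


def overall_classification(ratings: dict[str, str]) -> str:
    """Roll per-indicator ratings up into an overall label."""
    missing = [name for name in RISK_INDICATORS if name not in ratings]
    if missing:
        # Refuse to classify until every indicator has been rated.
        raise ValueError(f"unrated indicators: {missing}")
    # A single "high" rating is enough to escalate the whole platform.
    if any(ratings[name] == "high" for name in RISK_INDICATORS):
        return "high"
    # Other tiers are not described in this article, so none is named here.
    return "not high"
```

The practical point of the design is that a strong score in six categories does not offset a high-risk rating in the seventh.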

For platforms in this category, expectations include credible age verification, stronger content safeguards, accessible complaint mechanisms, and enforced access restrictions for underage users.

Game publishers should also note that this framework may extend to games that feature social networking, chat, or user-generated content. Under these rules, titles with such features may be required to deactivate the accounts of users under 16.

Mandatory Self-Assessment

The regulation also introduces a formal self-assessment obligation.

Digital platforms must submit a documented risk assessment via a designated online system to the Ministry within three months of the regulation entering into force (approximately 6 June 2026).

On 17 March 2026, the Minister of Communication and Digital Affairs issued a supporting technical decree (Ministerial Decree No. 142/2026) providing guidance on how publishers will be scored in their self-assessment. Using five detailed indicators, digital platforms must assess whether their products, services, or features may be accessed by children:

- Intended for children (based on terms/policies)

- Significant child user base

- Marketing directed at children

- Child-attractive design (e.g., gamification, visual UX)

- Similarity to services used by children

This means that compliance is not limited to account access alone. Features such as user interaction, reviews, or user-generated content may need reassessment. Meeting any one of the above indicators is sufficient to bring a product, service, or feature within scope under Indonesian law.
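One way to organise an internal review is to record, feature by feature, which of the five indicators apply. The sketch below assumes a hypothetical `FeatureAssessment` record and hypothetical indicator keys; it is not the Ministry's scoring method, only an illustration of the "any one indicator" test described above.

```python
from dataclasses import dataclass, field

# Hypothetical keys for the five child-access indicators listed above.
ACCESS_INDICATORS = frozenset({
    "intended_for_children",
    "significant_child_user_base",
    "marketing_directed_at_children",
    "child_attractive_design",
    "similar_to_childrens_services",
})


@dataclass
class FeatureAssessment:
    name: str
    indicators_met: set[str] = field(default_factory=set)

    def in_scope(self) -> bool:
        unknown = self.indicators_met - ACCESS_INDICATORS
        if unknown:
            # Catch typos so a misspelled key cannot slip through unnoticed.
            raise ValueError(f"unknown indicators: {sorted(unknown)}")
        # Meeting any single indicator is enough to bring a feature in scope.
        return bool(self.indicators_met)


def features_in_scope(assessments: list[FeatureAssessment]) -> list[str]:
    """Return the names of features that meet at least one indicator."""
    return [a.name for a in assessments if a.in_scope()]
```

With a hypothetical `guild_chat` feature flagged for `child_attractive_design` and a `billing_portal` feature with no indicators met, `features_in_scope` would return only `["guild_chat"]`.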

What this means for publishers

Indonesia remains one of Southeast Asia’s most important gaming markets, with a large digital population and strong engagement among younger users. This makes MR TUNAS more than a local compliance development. As child safety expectations become more prominent across major digital markets, regulatory readiness is increasingly important for a broader market access strategy.

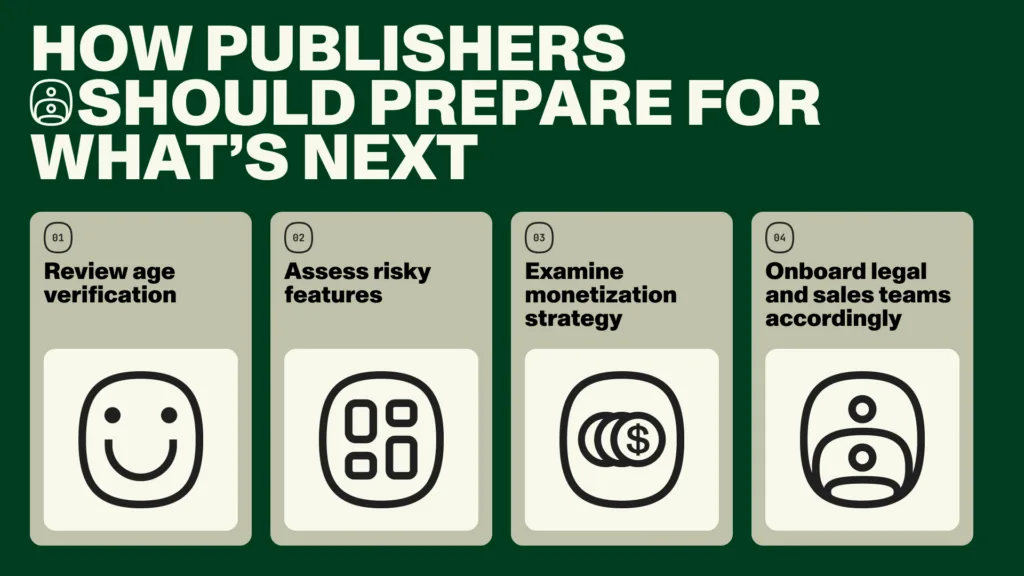

Publishers can consider the following when preparing for compliance:

1. Review how age is currently handled in your product.

Across multiple markets, regulators are moving beyond self-declared age checks and asking platforms to show that safeguards are credible in practice. Even where technical standards are still evolving, publishers should already understand where age is collected, how that information is used, and whether existing controls would withstand closer regulatory scrutiny.

2. Identify features that may attract scrutiny.

Regulators are increasingly focused on how products function, not how they are labelled. Features such as chat, user reviews, guild systems, user-generated content, and recommendation tools can all be relevant where younger users are present.

Publishers should understand where age data is collected, how it is used, and whether existing controls can withstand closer scrutiny.

3. Reassess monetization design.

Brazil has already shown how quickly child safety regulation can extend into commercial design, particularly where paid loot boxes or similar mechanics are accessible to minors.

Even in markets where direct restrictions do not yet exist, monetization features are increasingly part of the broader regulatory conversation.

4. Coordinate early across teams before the legal enforcement date.

Indonesia requires providers to complete a self-assessment against child-related risk indicators, and similar expectations are emerging elsewhere.

Publishers that already understand where potential exposure sits across product, legal, and operational teams will be in a stronger position if requirements tighten further.

Indonesia may be one of only a few markets to adopt strong child safety measures so far, but the direction is already clear. Child safety expectations are becoming part of how markets define responsible platform design, particularly where younger users are active and platform features extend beyond core gameplay.

The question is no longer which markets will tighten their rules, but rather, which platforms will be ready when they do.


© 2026 Coda Payments Pte. Ltd
